错误

今天运行 Spark 任务时报了一个错误,如下所示:

WARN scheduler.TaskSetManager: Lost task 9.0 in stage 3.0 (TID 69, xxx.xxx.xxx.com, executor 3): org.apache.spark.SparkException: Task failed while writing rows.at org.apache.spark.sql.execution.datasources.FileFormatWriter$.org$apache$spark$sql$execution$datasources$FileFormatWriter$$executeTask(FileFormatWriter.scala:254)at org.apache.spark.sql.execution.datasources.FileFormatWriter$$anonfun$write$1.apply(FileFormatWriter.scala:169)at org.apache.spark.sql.execution.datasources.FileFormatWriter$$anonfun$write$1.apply(FileFormatWriter.scala:168)at org.apache.spark.scheduler.ResultTask.runTask(ResultTask.scala:90)at org.apache.spark.scheduler.Task.run(Task.scala:121)at org.apache.spark.executor.Executor$TaskRunner$$anonfun$10.apply(Executor.scala:402)at org.apache.spark.util.Utils$.tryWithSafeFinally(Utils.scala:1360)at org.apache.spark.executor.Executor$TaskRunner.run(Executor.scala:408)at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)at java.lang.Thread.run(Thread.java:748)

Caused by: java.lang.NullPointerExceptionat java.lang.System.arraycopy(Native Method)at org.apache.hadoop.hive.ql.io.orc.DynamicByteArray.add(DynamicByteArray.java:115)at org.apache.hadoop.hive.ql.io.orc.StringRedBlackTree.addNewKey(StringRedBlackTree.java:48)at org.apache.hadoop.hive.ql.io.orc.StringRedBlackTree.add(StringRedBlackTree.java:55)at org.apache.hadoop.hive.ql.io.orc.WriterImpl$StringTreeWriter.write(WriterImpl.java:1211)at org.apache.hadoop.hive.ql.io.orc.WriterImpl$StructTreeWriter.write(WriterImpl.java:1734)at org.apache.hadoop.hive.ql.io.orc.WriterImpl.addRow(WriterImpl.java:2403)at org.apache.hadoop.hive.ql.io.orc.OrcOutputFormat$OrcRecordWriter.write(OrcOutputFormat.java:86)at org.apache.spark.sql.hive.execution.HiveOutputWriter.write(HiveFileFormat.scala:149)at org.apache.spark.sql.execution.datasources.SingleDirectoryDataWriter.write(FileFormatDataWriter.scala:137)at org.apache.spark.sql.execution.datasources.FileFormatWriter$$anonfun$org$apache$spark$sql$execution$datasources$FileFormatWriter$$executeTask$3.apply(FileFormatWriter.scala:242)at org.apache.spark.sql.execution.datasources.FileFormatWriter$$anonfun$org$apache$spark$sql$execution$datasources$FileFormatWriter$$executeTask$3.apply(FileFormatWriter.scala:239)at org.apache.spark.util.Utils$.tryWithSafeFinallyAndFailureCallbacks(Utils.scala:1394)at org.apache.spark.sql.execution.datasources.FileFormatWriter$.org$apache$spark$sql$execution$datasources$FileFormatWriter$$executeTask(FileFormatWriter.scala:245)...

ORC 仅在 HiveContext 中受支持,但这里使用 SQLContext。

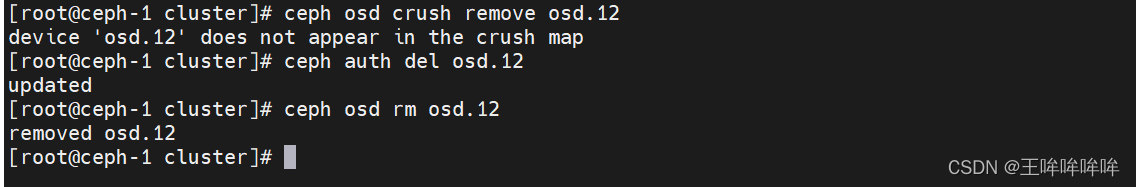

解决办法

SQLContext 存在一些问题,尝试使用 HiveContext。 使用以下配置来解决:

spark.sql.orc.impl=native

native 和 hive 二选一,native 是基于 ORC1.4,表示使用 Spark SQL 提供的本地ORC实现方式。hive 是基于 Hive 的 ORC1.2.1